AI in Psychological Practice: Governance, Risk, and Responsibility

Psychology and Regulation Trends

Considerations for Regulators and Licensing Boards

“The danger wasn’t that machines would become too intelligent. It was that humans would be too quick to believe that machines were intelligent.” — Joseph Weizenbaum.

Artificial Intelligence (AI) is now ubiquitous, with effects visible across healthcare, education, and professional services. For regulators, this growing presence raises important questions about the implications for the provision of psychological services and how best to ensure public protection. It also compels deeper consideration of ethical obligations, data privacy, and the preservation of trust in the therapeutic relationship.

At the Association of State and Provincial Psychology Boards (ASPPB)® 40th Midyear Meeting in April, Ernest Wayde, Ph.D., M.I.S., addressed critical governance and accountability issues in his keynote, “Who is Responsible? Governing Psychological Practice in an Era of Artificial Intelligence.”

AI and the Practice of Psychology

AI is already embedded in clinical workflows, with 56% of practitioners reporting use within the past year. Its primary functions—pattern recognition, drafting documentation, summarization, and early risk detection—are assistive rather than autonomous.

However, these systems operate through probabilistic language generation rather than clinical reasoning. They lack judgment, ethics, and accountability. As such, their output cannot replace the core responsibilities of licensed psychologists, particularly in decision-making and patient care.

“AI is a tool, a great tool that can be very helpful and useful, but it has no accountability, no responsibility, no moral code, it has no licensure,” said Dr. Wayde. “Who is ultimately responsible for the use of this technology, and how are regulatory bodies rising to provide guidance on a rapidly evolving technology?”

Responsibility and Accountability

For regulators, one principle remains clear: accountability does not shift to AI. The licensed psychologist remains fully responsible for all aspects of care, including any AI-supported processes.

This becomes especially critical when harm occurs. If AI informs or influences a clinical decision, regulators must still evaluate the practitioner’s role—how the tool was used, whether its outputs were critically assessed, and whether professional standards were upheld.

Key Governance Challenges for Regulators

The integration of AI raises a set of pressing governance questions that boards and jurisdictions are beginning to address more explicitly:

- Accountability for Harm: When AI-guided care causes harm, responsibility rests with the practitioner. Regulators, however, must determine how to assess decision-making in cases where AI played a role.

- Boundaries of Use: There is a critical gap in defining where AI use is appropriate and where it is not. This includes its role in assessment, diagnosis, documentation, and clinical judgment.

- Standards for Validation: There is currently limited consensus on how clinicians should verify AI-generated outputs. Regulators must consider what constitutes sufficient review and validation.

- Documentation Requirements: When AI is used, should it be disclosed in clinical records? If so, under what circumstances and with what level of detail? Governing bodies will need to remain flexible on these questions, adjusting requirements as AI grows more sophisticated while continuing to fulfill their duty of public protection.

- AI Literacy Expectations: Both practitioners and regulators need a baseline level of AI literacy. Without it, meaningful oversight and enforcement could become difficult.

Immediate Actions for Boards and Colleges

While long-term frameworks are still developing, Dr. Wayde offered several concrete steps regulatory bodies can consider taking to strengthen oversight:

- Coordinate Across Jurisdictions: Collaboration through organizations such as the Association of State and Provincial Psychology Boards can promote consistency and shared standards.

- Define AI Literacy Thresholds: Establish clear expectations for what practitioners and board members should understand about AI systems, their limitations, and risks.

- Build Capacity: Invest in education and training to enhance regulators’ AI literacy.

- Adopt a Position on Accountability: Clearly articulate that responsibility for AI use remains with the licensed professional.

- Issue Documentation Guidance: Provide direction on when and how AI use should be recorded in clinical documentation.

When a Complaint Involves AI

Regulators should also be prepared for complaints involving AI-assisted practice, Dr. Wayde underscored. When such cases arise, investigations may need to address new lines of inquiry:

Key questions to examine:

- Was AI involved in the service provided?

- Did the practitioner review and critically evaluate the AI-generated output?

- Is the clinical documentation accurate and complete?

Equally important are potential gaps:

- Is there any disclosure that AI was used?

- Is there evidence of independent clinical judgment?

- Are there applicable AI-specific standards to guide evaluation?

In many cases, these elements may be absent, reflecting the current lack of formalized guidance and consistent practice standards.

Regulatory Focus Moving Forward

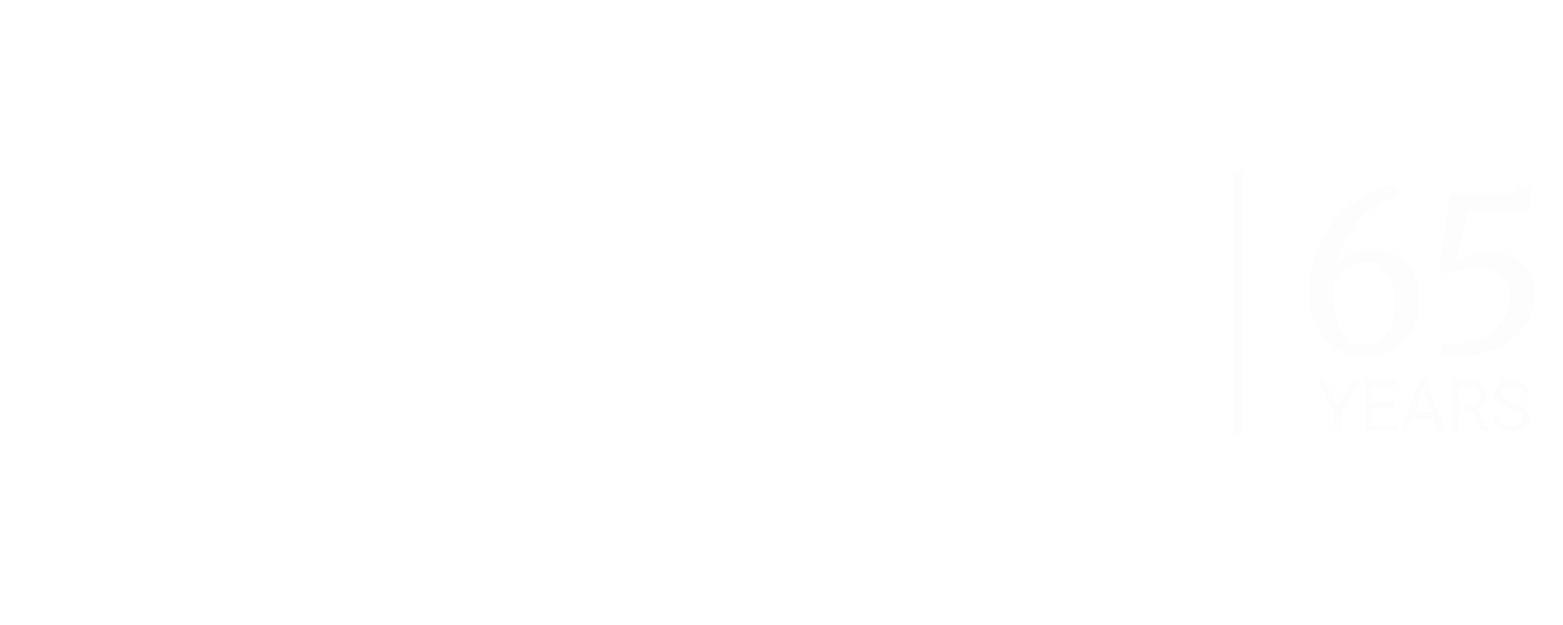

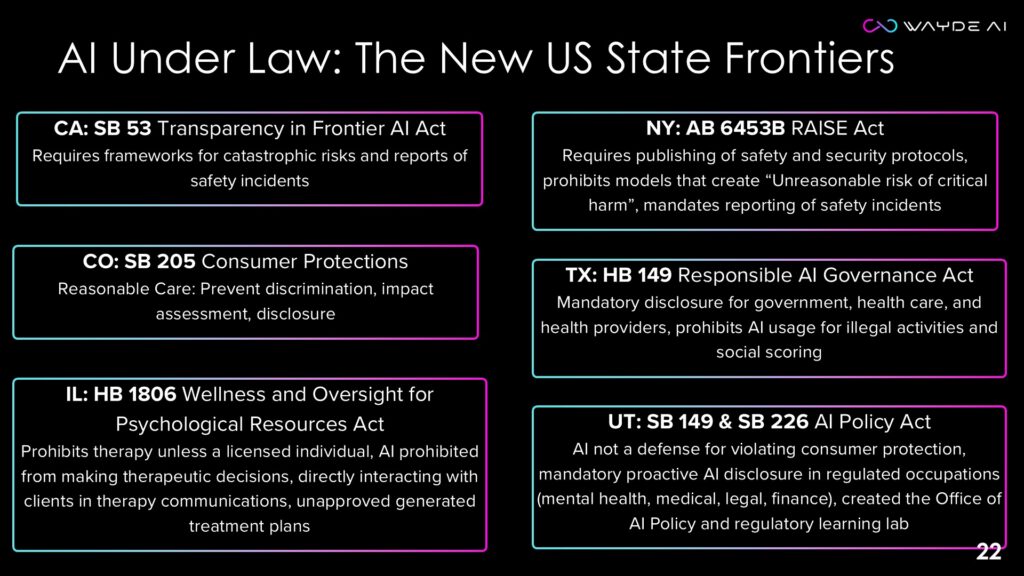

Despite the novelty of AI, the regulatory mandate remains unchanged: protect the public by ensuring competent, ethical, and accountable practice. AI does not alter this mandate; it intensifies it. The slides above illustrate some of the laws that may affect the use of AI in the practice of psychology.

Furthermore, regulators must stay up to date with laws in both the U.S. and Canada, while keeping in mind that regulatory bodies govern people, not AI.

As adoption accelerates, regulators face a dual challenge: enabling responsible innovation while ensuring that professional standards are not diluted. Addressing gaps in guidance, literacy, accountability, and privacy will be essential to maintaining trust in psychological services in an AI-enabled future.

About Dr. Ernest Wayde

Dr. Ernest Wayde operates at the intersection of human behavior and advanced technology, partnering with organizations to educate, strategize, and execute successful ethical and responsible AI adoption. His unique background combines technological expertise with a deep understanding of human behavior and organizational dynamics.

With a master’s in Information Systems from Wright State University, Dr. Wayde has established himself as an AI authority through extensive certifications from the Microsoft Academy and the MIT Sloan School of Management in Artificial Intelligence: Implications for Business Strategy.

His doctorate in Clinical and Cognitive Psychology from the University of Alabama provides valuable insight into the human factors critical to successful AI integration.

Dr. Wayde specializes in guiding organizations through technological transformation, addressing both technical requirements and organizational readiness. His methodology combines cutting-edge AI knowledge with proven organizational development principles, ensuring ethical and sustainable AI implementation that drives strategic growth. To learn more about Dr. Wayde, visit https://www.waydeai.com/
